Why Static PAR Levels Are Broken in Modern QSR Operations

Static PAR levels are an old answer to a modern problem.

They assume demand is stable enough that a fixed target inventory or prep level can sit on top of historical averages and still deliver acceptable waste, availability and labour outcomes. That assumption breaks fast in QSR.

Modern QSR demand is not stationary. It is state-dependent, highly local, promotion-sensitive and shaped by external signals that move faster than a human planner or a weekly spreadsheet can react to. Weather moves demand. Traffic moves demand. Local events move demand. Competitor actions move demand. Promotions reshape both absolute demand and the mix between products. And once you move from menu demand to ingredient demand, the system becomes even more nonlinear.

A static PAR level ignores that structure. It treats a conditional forecasting problem as a fixed threshold problem.

The static PAR formulation

In its simplest form, a static PAR policy for item $i$ is:

$$
\text{PAR}_i = \mu_i L_i + SS_i
$$

Where:

- $\mu_i$ is average demand per period

- $L_i$ is lead time or replenishment horizon

- $SS_i$ is fixed safety stock

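Sometimes this is dressed up slightly, for example with a day-of-week mean $\mu_{i,d}$ and a buffer scaled by the historical standard deviation $\sigma_{i,d}$ and a fixed service factor $z$:

$$
\text{PAR}_{i,d} = \mu_{i,d} L_i + z \, \sigma_{i,d} \sqrt{L_i}
$$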

That is still static. You may have day-of-week segmentation, maybe even hour-of-day segmentation, but the core assumption remains that the future is mostly a repeat of the recent past.
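
To make the static formulation concrete, here is a minimal Python sketch of an average-plus-buffer PAR with day-of-week segmentation. The function names and the sample history are illustrative assumptions, not taken from any real system.

```python
from statistics import mean

def static_par(history, lead_time_periods, safety_stock):
    """Fixed target: average demand over the lead time plus a fixed buffer."""
    return mean(history) * lead_time_periods + safety_stock

def day_of_week_par(history_by_day, lead_time_periods, safety_stock):
    """Segmented variant: one fixed PAR per weekday, still blind to current state."""
    return {
        day: static_par(units, lead_time_periods, safety_stock)
        for day, units in history_by_day.items()
    }

# Hypothetical unit sales for one item on four past Mondays and Saturdays.
history_by_day = {
    "Mon": [40, 44, 42, 38],
    "Sat": [90, 110, 70, 130],
}
pars = day_of_week_par(history_by_day, lead_time_periods=1, safety_stock=10)
print(pars)
```

In this made-up history, Saturday demand ranged from 70 to 130 units, yet the policy returns a single number for every Saturday. Whatever drove that spread is invisible to the rule.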

That assumption only works if all of the following are roughly true:

- demand is close to stationary

- forecast error variance is stable

- promotions have repeatable effects

- external drivers are weak or average out

- sales are a good proxy for true demand

In a real QSR estate, none of those hold consistently.

What the model should actually be solving

The real problem is not to set a static stock target. It is to estimate a conditional demand distribution and optimise against it.

For store $s$, item $i$, and decision time $t$, the target is:

$$
P\left(D_{s,i,t:t+H} \mid X_t\right)
$$

Where:

- $D_{s,i,t:t+H}$ is demand over the planning horizon

- $X_t$ is the state vector at decision time

And $X_t$ should not be a thin feature set. In a serious QSR system it includes at least:

- calendar structure: hour, day, week, month, holidays, school terms, payday cycles

- weather: temperature, rainfall, humidity, wind, heat index, deviation from local norm

- traffic and mobility: road congestion, local footfall, travel time distortions, catchment activity

- events: stadium fixtures, concerts, transport disruption, retail park events, festivals

- competition: nearby openings, closures, local price changes, meal deals, delivery coverage shifts

- promotions: discount depth, channel, creative type, bundle mechanics, start and end boundaries

- operations: labour coverage, open hours, channel mix, kiosk uptime, delivery marketplace availability

- inventory state: on-hand, age, expiry window, waste risk, inbound deliveries

This is where static PAR collapses. It compresses a high-dimensional conditional problem into one fixed threshold.

Demand is conditional, heteroscedastic and censored

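A more realistic baseline for menu-level demand in QSR is a count model with state-dependent mean and variance. For example, with a negative binomial likelihood and dispersion $\phi_{s,m,t}$:

$$
D_{s,m,t} \mid X_t \sim \text{NegBin}\left(\mu_{s,m,t}, \phi_{s,m,t}\right), \quad \log \mu_{s,m,t} = f_\mu(X_t), \quad \log \phi_{s,m,t} = f_\phi(X_t)
$$

$$
\text{Var}\left(D_{s,m,t} \mid X_t\right) = \mu_{s,m,t} + \frac{\mu_{s,m,t}^2}{\phi_{s,m,t}}
$$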

Where $m$ is a menu item and the $f()$ terms can be linear, spline-based, tree-based, or a hybrid depending on the stack.

The important point is this: variance is not constant. A rainy Tuesday morning and a bank holiday lunch with a delivery offer do not have the same error structure. The distribution changes with the state.

And observed sales are often censored:

$$
S_t = \min(D_t, A_t)
$$

Where $A_t$ is available stock or capacity.

If a store stocks out, you do not observe true demand. You observe the lower of demand and availability. If you train a static PAR rule, or even a naive forecast, on censored sales without correction, you will systematically underestimate demand in exactly the periods where demand is strongest. That pushes the next PAR down further and locks in chronic under-ordering.

For advanced operations, demand estimation needs explicit stockout handling, either through censored likelihoods, imputation, or counterfactual demand recovery.
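
As one hedged illustration of the censored-likelihood route, the sketch below fits a Poisson rate to sales records that are flagged when the store stocked out. The Poisson assumption, the grid search and every name and number here are illustrative choices; a production model would condition on the state vector and use a proper optimiser.

```python
import math

def poisson_logpmf(k, lam):
    """Log of P(D = k) for D ~ Poisson(lam)."""
    return k * math.log(lam) - lam - math.lgamma(k + 1)

def poisson_tail(k, lam):
    """P(D >= k) for D ~ Poisson(lam), floored to avoid log(0)."""
    below = sum(math.exp(poisson_logpmf(j, lam)) for j in range(k))
    return max(1.0 - below, 1e-300)

def censored_loglik(lam, observations):
    """Stockout periods only tell us demand was at least the units sold."""
    total = 0.0
    for sales, stocked_out in observations:
        if stocked_out:
            total += math.log(poisson_tail(sales, lam))
        else:
            total += poisson_logpmf(sales, lam)
    return total

def fit_censored_poisson(observations, grid=None):
    """Pick the rate on a coarse grid that maximises the censored likelihood."""
    grid = grid or [x / 10 for x in range(10, 1001)]
    return max(grid, key=lambda lam: censored_loglik(lam, observations))

# Hypothetical (units_sold, stocked_out) pairs; stock was capped at 30 units.
observations = [
    (22, False), (25, False), (30, True), (30, True),
    (19, False), (30, True), (27, False), (30, True),
]
naive_mean = sum(sales for sales, _ in observations) / len(observations)
corrected = fit_censored_poisson(observations)
print(f"naive mean: {naive_mean:.2f}, censoring-aware rate: {corrected:.1f}")
```

The censoring-aware estimate sits above the naive mean of sales, because each stockout period contributes "demand was at least 30" rather than "demand was exactly 30".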

The multiple-source data model matters

Single-source forecasting is not enough for QSR. Historical EPOS data alone is too slow and too incomplete. It can tell you what happened, but not why demand moved, nor what is about to move it.

A stronger architecture is a multiple-source demand model, where each source contributes a different part of the demand signal.

At a practical level:

- Weather captures appetite shifts, mission shifts and channel substitution. Heat affects cold drinks, rain affects footfall, storms affect delivery mix.

- Traffic captures access friction and catchment flow. A store with strong nominal demand can still miss sales when road conditions suppress arrival volume.

- Events capture local demand spikes and shape distortion. A concert, fixture or school event changes both volume and basket composition.

- Competition captures share displacement. Nearby offers, new openings or local closures can move demand in ways that static averages will miss.

- Promotions capture intentional demand shocks, but also mix effects, cannibalisation and post-promo decay.

For a real QSR estate, these signals should not sit in separate dashboards. They should feed the same feature pipeline, aligned in time and geography, and trained against the same forecasting target.

A robust feature set might include:

```
x_t = [
    temp_t, rain_mm_t, temp_anomaly_t,
    traffic_index_t, travel_time_delta_t,
    event_intensity_t, competitor_promo_score_t,
    own_discount_depth_t, own_bundle_flag_t,
    holiday_flag_t, school_break_flag_t,
    hour_of_day_t, channel_mix_t-1,
    lag_1, lag_7, lag_28,
    rolling_mean_7, rolling_std_28
]
```

That is the level where the problem becomes predictive rather than reactive.
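
A small Python sketch of how the lag and rolling terms in that feature set might be assembled alongside external signals is shown here. It assumes a daily sales series for one store and item; the function name, the external field values and the series are hypothetical.

```python
from statistics import mean, stdev

def build_feature_row(sales, t, external):
    """Build one feature row for day index t using only data from before t."""
    if t < 28:
        raise ValueError("need at least 28 days of history before t")
    window_7 = sales[t - 7:t]     # the 7 days strictly before t
    window_28 = sales[t - 28:t]   # the 28 days strictly before t
    row = {
        "lag_1": sales[t - 1],
        "lag_7": sales[t - 7],
        "lag_28": sales[t - 28],
        "rolling_mean_7": mean(window_7),
        "rolling_std_28": stdev(window_28),
    }
    # External signals are assumed to be already aligned to day t and this store.
    row.update(external)
    return row

# Hypothetical inputs: 30 days of unit sales and forecast-time external signals.
sales = list(range(1, 31))
external = {"temp_t": 24.5, "rain_mm_t": 0.0, "event_intensity_t": 0.7}
row = build_feature_row(sales, t=29, external=external)
print(row)
```

Slicing strictly before `t` is the important design choice: any feature that includes day `t` itself leaks the target into training.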

Promotions break naive PAR logic

Promotions are one of the clearest reasons static PAR is broken.

A promotion does not just lift demand. It can:

- shift volume forward in time

- change channel mix

- substitute one menu item for another

- change basket attachment rates

- alter ingredient consumption nonlinearly

- create post-promo demand softness

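A weak model treats promo as a binary flag. A better model estimates uplift as a function of promo mechanics $P_t$ and context $X_t$, for example as a multiplicative lift on the baseline mean:

$$
\mu^{\text{promo}}_{s,m,t} = \mu^{\text{base}}_{s,m,t} \cdot \exp\left(u(P_t, X_t)\right)
$$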

Where $u()$ captures promotion uplift conditional on state.

But even that is not enough if the business wants true causal understanding. Promotions are not randomly assigned. They are often targeted to periods already expected to perform differently. That means:

$$
\mathbb{E}[D \mid \text{promo} = 1] - \mathbb{E}[D \mid \text{promo} = 0] \neq \text{causal uplift}
$$

For an advanced stack, promo modelling should correct for selection bias and estimate true uplift. Depending on the environment, that may involve doubly robust estimation, uplift models, causal forests, or carefully structured quasi-experimental methods.
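
The selection problem can be illustrated with a deliberately simple Python sketch. It uses stratification by day type as a stand-in for the heavier estimators named above, not as a replacement for them. The data is made up: promotions mostly run on weekends, which already sell more, and the true uplift is 20 units in every stratum.

```python
from collections import defaultdict
from statistics import mean

def naive_uplift(rows):
    """Raw difference in mean units between promo and non-promo days."""
    promo = [units for _, on_promo, units in rows if on_promo]
    base = [units for _, on_promo, units in rows if not on_promo]
    return mean(promo) - mean(base)

def stratified_uplift(rows):
    """Compare promo vs non-promo within each segment, then weight by segment size."""
    by_segment = defaultdict(lambda: {True: [], False: []})
    for segment, on_promo, units in rows:
        by_segment[segment][bool(on_promo)].append(units)
    weighted, total = 0.0, 0
    for groups in by_segment.values():
        if not groups[True] or not groups[False]:
            continue  # no overlap in this segment, so no comparison is possible
        n = len(groups[True]) + len(groups[False])
        weighted += n * (mean(groups[True]) - mean(groups[False]))
        total += n
    if total == 0:
        raise ValueError("no segment contains both promo and non-promo days")
    return weighted / total

# Hypothetical (segment, on_promo, units) rows.
rows = (
    [("weekday", False, 100)] * 4 + [("weekday", True, 120)]
    + [("weekend", False, 150)] + [("weekend", True, 170)] * 4
)
print(naive_uplift(rows), stratified_uplift(rows))
```

The naive comparison reports 50 units of uplift, while the within-segment comparison recovers 20. The gap is entirely due to where the promotion was scheduled.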

And at ingredient level, the effect needs to propagate through the recipe graph:

$$
D_{s,i,t} = \sum_{m} a_{i,m,t} \, D_{s,m,t}
$$

Where $a_{i,m,t}$ is the recipe coefficient linking ingredient $i$ to menu item $m$.

Once promotions change menu mix, they also change the consumption pattern of shared ingredients. Static PAR at ingredient level misses this unless it explicitly models the menu-to-ingredient mapping.
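
The menu-to-ingredient mapping can be sketched in a few lines of Python. The recipes, item names and forecast numbers are hypothetical, and the recipe coefficients are held constant over time for simplicity.

```python
def ingredient_demand(menu_forecast, recipes):
    """Propagate menu-item demand through recipe coefficients to ingredients."""
    totals = {}
    for item, units in menu_forecast.items():
        for ingredient, qty_per_unit in recipes[item].items():
            totals[ingredient] = totals.get(ingredient, 0) + units * qty_per_unit
    return totals

# Hypothetical recipe graph: quantity of each ingredient per menu item sold.
recipes = {
    "cheeseburger": {"bun": 1, "patty": 1, "cheese_slice": 1},
    "double_burger": {"bun": 1, "patty": 2, "cheese_slice": 2},
}

# A promotion on the double burger pulls mix away from the cheeseburger.
baseline = {"cheeseburger": 100, "double_burger": 40}
promo = {"cheeseburger": 80, "double_burger": 90}

print(ingredient_demand(baseline, recipes))
print(ingredient_demand(promo, recipes))
```

In this example menu units rise from 140 to 170, about 21%, while patty demand rises from 180 to 260, about 44%. An ingredient-level PAR scaled by the headline sales uplift would run short on patties.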

The correct decision variable is not PAR. It is the optimal quantile

Once you have a conditional demand distribution, the decision problem becomes an optimisation problem.

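If $q$ is the order or prep quantity and $D$ is random demand, a simple single-period objective is:

$$
\min_{q} \; \mathbb{E}\left[ c_w (q - D)^+ + c_s (D - q)^+ + c_h \, h(q, D) + c_x \, e(q, D) \mid X_t \right]
$$

Where $(z)^+ = \max(z, 0)$, $h(q, D)$ is the stock held through the period, $e(q, D)$ is the stock that expires or fails compliance, and: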

- $c_w$ is overage cost or waste cost

- $c_s$ is shortage cost or lost margin and service penalty

- $c_h$ is holding cost

- $c_x$ is spoilage or compliance cost

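If the cost structure is simplified to a classic newsvendor form, the optimal policy is a quantile of the conditional demand distribution, with CDF $F_{D \mid X_t}$:

$$
q^* = F^{-1}_{D \mid X_t}\left(\frac{c_s}{c_s + c_w}\right)
$$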

That alone shows why static PAR is weak. The correct target is not a fixed average plus a fixed buffer. It is a state-conditioned quantile of the future demand distribution.
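
A minimal Python sketch of the quantile rule follows. It assumes the classic newsvendor critical ratio $c_s / (c_s + c_w)$ and reads the quantile off a set of demand samples, which in practice would come from the conditional forecast. The function names, costs and sample values are illustrative.

```python
import math

def critical_ratio(shortage_cost, overage_cost):
    """Target service quantile under the classic newsvendor cost structure."""
    return shortage_cost / (shortage_cost + overage_cost)

def newsvendor_quantity(demand_samples, shortage_cost, overage_cost):
    """Smallest sampled demand whose empirical CDF reaches the critical ratio."""
    ratio = critical_ratio(shortage_cost, overage_cost)
    ordered = sorted(demand_samples)
    index = max(math.ceil(ratio * len(ordered)) - 1, 0)
    return ordered[index]

# Hypothetical demand samples for the same item under two different states.
quiet_state = [18, 20, 21, 22, 23, 24, 25, 26, 28, 30]
busy_state = [30, 34, 38, 40, 42, 45, 48, 52, 58, 65]

q_quiet = newsvendor_quantity(quiet_state, shortage_cost=3, overage_cost=1)
q_busy = newsvendor_quantity(busy_state, shortage_cost=3, overage_cost=1)
print(q_quiet, q_busy)
```

With the same costs, the target moves from 26 to 52 units purely because the demand distribution changed. Flipping the costs so that waste is three times as expensive as a lost sale drops the quiet-state target to 21.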

And in QSR it often gets harder still because the system is multi-period and perishable, so on-hand inventory $I_t$ evolves as:

$$
I_{t+1} = I_t + q_t - \min(D_t, I_t + q_t) - W_t
$$

Where $W_t$ is waste. For hot hold items, fresh prep items and short-life ingredients, waste is not just excess stock. It is a function of elapsed time, thermal exposure and operational handling.

That means the real policy should usually optimise across both order quantity and prep timing.
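
To show why timing matters as much as quantity, here is a hedged Python sketch of a multi-period perishable simulation. It assumes first-in, first-out consumption and a fixed shelf life measured in periods, and it ignores thermal exposure and handling. All names and numbers are hypothetical.

```python
from collections import deque

def simulate_perishable(orders, demands, shelf_life):
    """FIFO simulation: stock arrives, serves demand oldest first, then ages."""
    batches = deque()  # each batch is [units_remaining, periods_left], oldest first
    waste = 0
    lost_sales = 0
    for order, demand in zip(orders, demands):
        if order > 0:
            batches.append([order, shelf_life])
        unmet = demand
        while unmet > 0 and batches:
            oldest = batches[0]
            used = min(oldest[0], unmet)
            oldest[0] -= used
            unmet -= used
            if oldest[0] == 0:
                batches.popleft()
        lost_sales += unmet
        for batch in batches:
            batch[1] -= 1          # everything left over ages by one period
        while batches and batches[0][1] <= 0:
            waste += batches.popleft()[0]   # expired stock is written off
    ending_stock = sum(batch[0] for batch in batches)
    return {"waste": waste, "lost_sales": lost_sales, "ending_stock": ending_stock}

demands = [20, 5, 10, 40]
static_plan = simulate_perishable([30, 30, 30, 30], demands, shelf_life=2)
timed_plan = simulate_perishable([20, 10, 10, 40], demands, shelf_life=2)
print(static_plan)
print(timed_plan)
```

Both plans serve all 75 units of demand in this example. The flat plan writes off 25 units along the way, while the plan that shifts quantity towards the busy period wastes nothing.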

Why this matters in QSR specifically

In QSR, waste is often treated as an operations issue. It is not. It is usually an estimation and optimisation issue.

If the model does not understand external demand drivers, promo mechanics, stockout censoring and recipe propagation, then store teams are forced to compensate with judgement calls. Sometimes that works in one site with a strong manager. It does not scale across an estate.

Static PAR levels persist because they are easy to explain and easy to operate. But ease of explanation is not the same as correctness.

For advanced operators, the stack should move from:

- fixed thresholds

- to conditional forecasting

- to probabilistic optimisation

- to closed-loop learning from realised outcomes

That is the difference between inventory control as a rule of thumb and inventory control as a data science system.

Final point

Orderly does this.

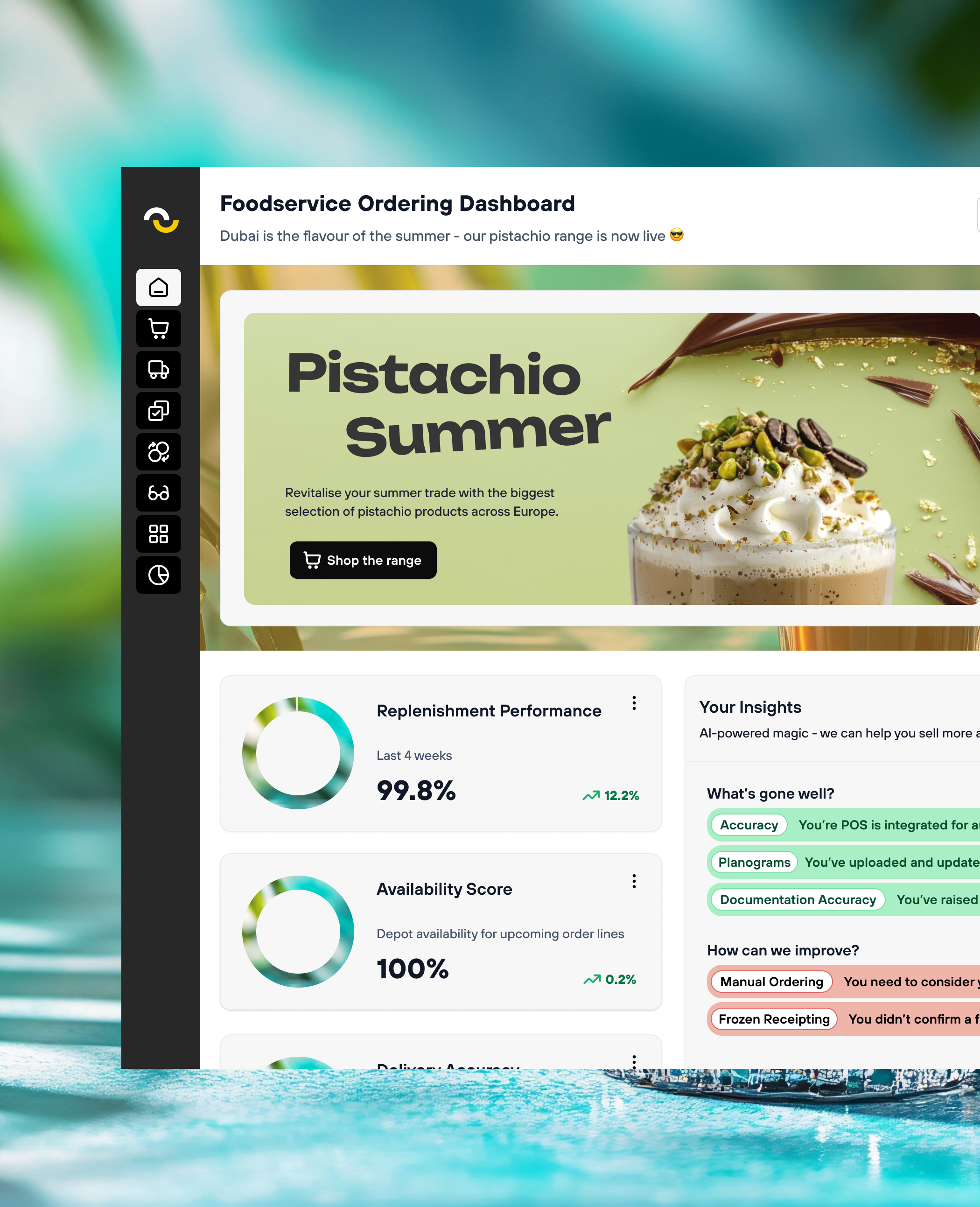

Orderly uses a multiple-source data model that combines internal and external signals such as sales history, weather, traffic, local events, competitive context and promotions to generate a richer demand view for QSR operations. That demand signal can then be used to drive smarter ordering and prep decisions than a static PAR model ever could.
